Short Bio

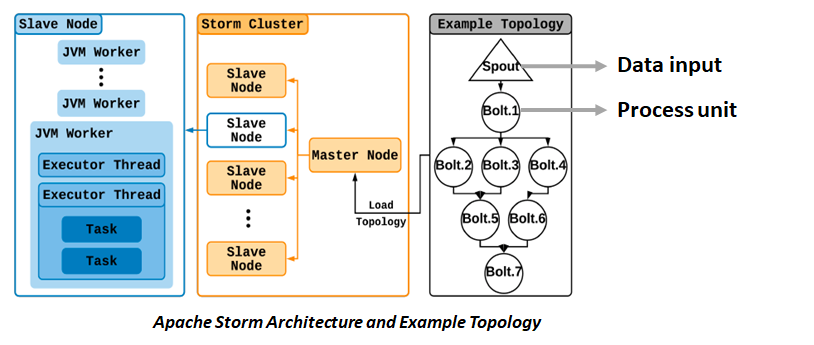

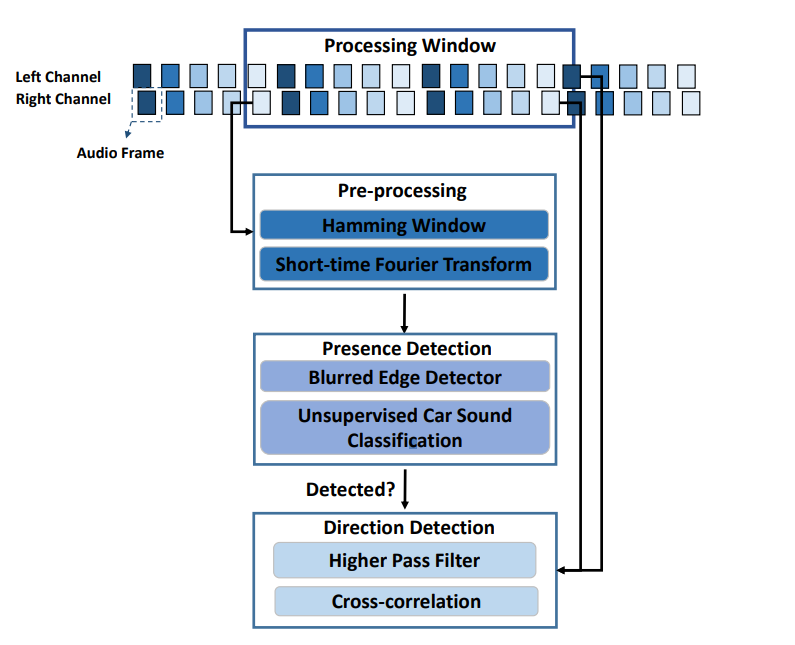

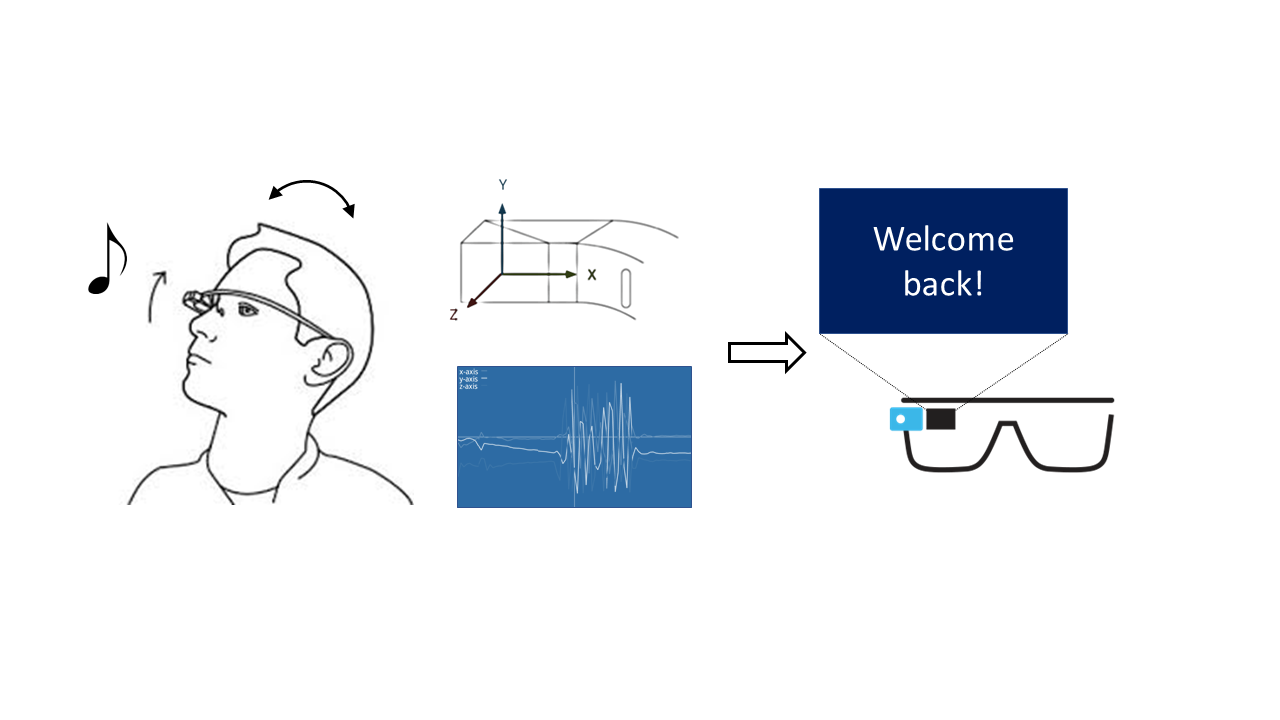

Sugang Li is currently a Software Engineer at Google Kubernetes Engine Networking. Sugang received his M.S. and Ph.D. from WINLAB, Rutgers University, where he worked closely with Prof. Yanyong Zhang and Prof. Dipankar Raychaudhuri. His research focuses on IoT protocol design over Future Internet Architecture, edge/mobile computing, and machine learning. His work combines practical system building with theoretical analysis, and its ultimate goal is to bridge the gap between sensing applications and the computing and networking infrastructure. His work has been published in top conferences such as ACM UbiComp/IMWUT, IEEE INFOCOM, IEEE PerCom, and ACM SenSys, with over 500 citations and an h-index of 10. Before coming to Rutgers, he received his B.E. in Biomedical Engineering from Southern Medical University.

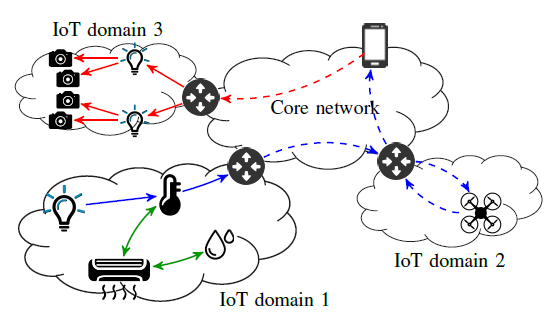

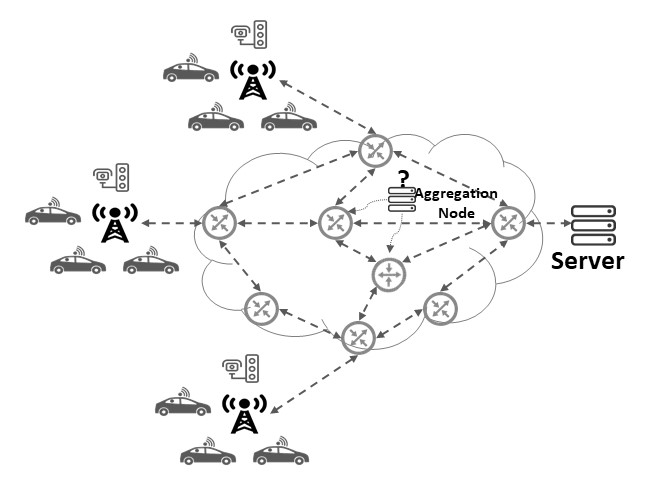

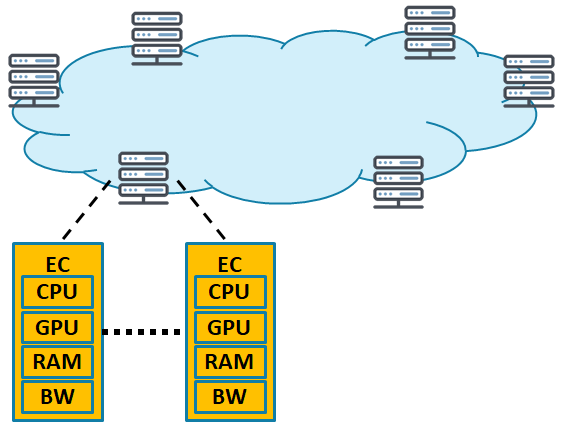

IoT, 5G, Mobile/Edge Computing
